How to import a CSV file into a table and Export a CSV file into a table ? Give appropriate example.

SOLUTION....

1) MySQL / MariaDB

Example table & sample CSV

Table schema:

CREATE TABLE employees ( id INT, name VARCHAR(100), dept VARCHAR(50), hire_date DATE, salary DECIMAL(10,2) );

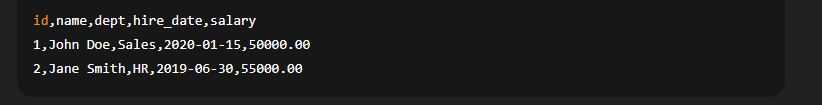

Sample CSV (employees.csv):

Import CSV into table (server-side file)

LOAD DATA INFILE '/path/on/server/employees.csv' INTO TABLE employees FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"' LINES TERMINATED BY '\n' IGNORE 1 LINES (id, name, dept, @hire_date, salary) SET hire_date = STR_TO_DATE(@hire_date, '%Y-%m-%d');

IGNORE 1 LINESskips the header row.Use

@variablefor columns you need to transform (dates, trimming, NULL handling).LOAD DATA INFILEreads a file on the DB server and requires file permissions andsecure_file_privsettings.

Import CSV from client machine (LOCAL)

LOAD DATA LOCAL INFILE '/path/on/client/employees.csv' INTO TABLE employees FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"' LINES TERMINATED BY '\n' IGNORE 1 LINES (id, name, dept, @hire_date, salary) SET hire_date = STR_TO_DATE(@hire_date, '%Y-%m-%d');

LOCALuploads from the client machine; server must allowLOCALand client lib must support it.

Export table to CSV (server-side file)

MySQL SELECT ... INTO OUTFILE writes to the server filesystem:

SELECT 'id','name','dept','hire_date','salary' UNION ALL SELECT id, name, dept, DATE_FORMAT(hire_date, '%Y-%m-%d'), salary FROM employees INTO OUTFILE '/var/lib/mysql-files/employees_export.csv' FIELDS TERMINATED BY ',' OPTIONALLY ENCLOSED BY '"' LINES TERMINATED BY '\n';

UNION ALLwith a first row is a common workaround to include a header (MySQLINTO OUTFILEcannot add headers by option)./var/lib/mysql-filesis a commonsecure_file_privallowed path — check yoursecure_file_privvariable.

Common MySQL pitfalls & tips

Check

SHOW VARIABLES LIKE 'secure_file_priv';— it may restrict OUTFILE path.Use correct

LINES TERMINATED BYfor Windows ('\r\n') vs Unix ('\n').If dates are not in DB format, parse with

STR_TO_DATE.For large imports, disable indexes, wrap in a transaction, then re-enable for speed.

2) PostgreSQL

Same employees.csv example (with header)

Server-side import (server file)

COPY employees(id, name, dept, hire_date, salary) FROM '/path/on/server/employees.csv' WITH (FORMAT csv, HEADER true, DELIMITER ',', ENCODING 'UTF8');

Client-side import (psql client) — common approach

\copy employees(id, name, dept, hire_date, salary) FROM 'employees.csv' CSV HEADER;

\copyruns in thepsqlclient and reads a client-side file;COPYreads server-side file.

Export to CSV (server-side)

COPY ( SELECT id, name, dept, TO_CHAR(hire_date,'YYYY-MM-DD') AS hire_date, salary FROM employees ) TO '/path/on/server/employees_export.csv' WITH (FORMAT csv, HEADER true, DELIMITER ',', ENCODING 'UTF8');

PostgreSQL tips

HEADER trueautomatically includes column names.Use

\copywhen you cannot access server filesystem.Handle nulls with

NULL ''in COPY options if needed.

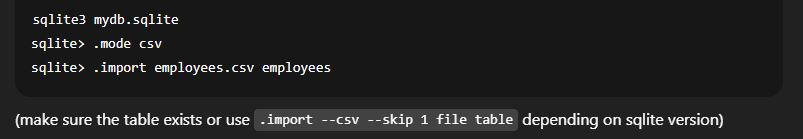

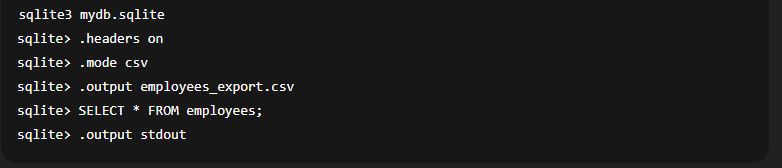

3) SQLite (cli)

Import CSV

Export CSV

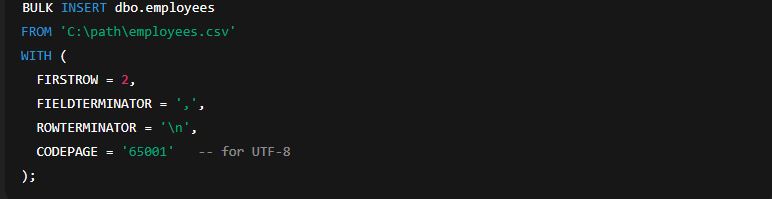

4) SQL Server (MSSQL)

Import CSV using BULK INSERT

5) Important common concerns (short checklist)

Headers: MySQL uses

IGNORE 1 LINES; PostgreSQL hasHEADER true.File location: Server-side tools write/read server filesystem. Use client-side

LOCAL/\copy/ sqlite client if file resides on client.Permissions: DB server must have read/write permissions for the file path and

secure_file_privrestrictions observed.Delimiters & quoting: Specify

FIELDTERMINATED BY,OPTIONALLY ENCLOSED BY '"'(MySQL) orDELIMITER ','+ proper quoting (Postgres).Line endings: Use

\r\nfor Windows files if needed.Encoding: Ensure file encoding (UTF-8) matches DB expectations to avoid mojibake.

Data formatting: Convert dates/booleans/decimal formats as needed (use

STR_TO_DATE,TO_CHAR, CAST, or load into text columns then transform).NULL values: Decide how empty fields map to NULL; some COPY/LOAD options allow explicit

NULLstring.Large imports: Wrap in a transaction, disable indexes/foreign keys temporarily, bulk-load (LOAD DATA / COPY) for speed.

Backup first: Always backup before large imports or destructive operations.